With most of the preliminaries over, we have one more task before us: a brief introduction to deception networks. In the posts that follow this one, I'll integrate digital forensics and we'll look at some use cases. Before that, though, we need to get an idea of what I mean by a deception net.

First, what a deception network isn't. It is not a honeypot or honeynet. While it is true, of course, that deception nets evolved from honeypots, today's deception network is far beyond that rather primitive technology. In fact, if you start with a honeypot, you'll have a bit of a trek to get in the neighborhood of today's deception networks driven by Machine Learning (ML).

The main difference is that a deception network learns its host environment—network, users, email systems, devices, active directory (AD), etc.—and integrates itself into the host seamlessly. It also raises, through its ML-driven discovery and configuration, the probability that an attacker will interact with a decoy before interacting—or attempting to interact—with a real device, IP, user, or other part of the enterprise. It is this interaction that labels the actor as an intruder and methodically guides him or her away from real assets and into a sinkhole, gathering intelligence all along the way.

So, at this point, we need a couple more concepts to understand what's going on under the covers. Those concepts are emergence and entropy. These can become extremely complicated when used in research by mathematicians, physicists, and social scientists, but for our purposes we'll just scratch their surfaces.

Emergence is a property of an entity that occurs when the entity enters another entity and becomes part of it. When an autonomous bot enters the enterprise, it uses its ML capability to learn the enterprise and become a legitimate-appearing part of it. It is demonstrating emergent properties. To defend against the invader, our deception must also exhibit emergent properties. As the invader encounters its first decoy, the deception network starts to create a new part of its environment that satisfies the invader, and as the invader moves deeper into the enterprise, the deception ML moves with it, guiding it away from real targets and into the sinkhole.

The second concept, entropy, was described by Claude Shannon in 1948. It, too, is quite complicated, but for our purposes we'll just say that entropy is a measure of randomness. Robert Rosen, a theoretical biologist writing in his book "Essays on Life Itself," said that there is a difference between complexity and complicatedness. If something is complex, it cannot be modeled; thus, it cannot be computed. The obvious example of complexity is a person. While we may be able to anticipate how a person may react in a given situation, we cannot predict with certainty what he or she will do.

With that in mind, the autonomous bot has the goal of emerging into the enterprise and behaving as the legitimate entity it is mimicking would behave. However, if it tries to mimic a user, it will need to exhibit that user's randomness, or entropy. It will not be able to. It must behave predictably, attaching itself to a decoy in the deception net, and its entropy will differ from the entropy of a legitimate entity. The entropy may be high while it is searching for an entry point into the enterprise and become lower as it attaches to the enterprise and begins emergent behavior. All of this activity provides clues that the deception ML can use to identify and quarantine the intruder without the intruder's knowledge.
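To make "entropy as a measure of randomness" a little more concrete, here is a minimal Python sketch of Shannon's formula, H = sum of p * log2(1/p), applied to the actions seen in a session. It is only an illustration of the idea, not how BOTsink or any other product actually scores behavior; the function name `shannon_entropy` and the two event lists are hypothetical and invented for this example.

```python
import math
from collections import Counter


def shannon_entropy(events):
    """Return the Shannon entropy, in bits, of the observed events."""
    total = len(events)
    if total == 0:
        return 0.0
    counts = Counter(events)
    # H = sum over distinct events of p * log2(1/p)
    return sum((c / total) * math.log2(total / c) for c in counts.values())


# Hypothetical session data, made up for illustration only.
# An intruder hunting for a way in tries many different accounts...
searching = [
    "login:root", "login:admin", "login:test", "login:oracle",
    "login:guest", "login:pi", "login:user", "login:ftp",
]
# ...and then settles into a narrow, repetitive routine once attached.
attached = ["login:admin"] + ["ls"] * 7

print(f"searching: {shannon_entropy(searching):.2f} bits")
print(f"attached:  {shannon_entropy(attached):.2f} bits")
```

In this toy data the searching phase scores higher than the attached phase, which matches the high-then-lower pattern described above. A real system would presumably look at far richer features than a simple count of distinct actions.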

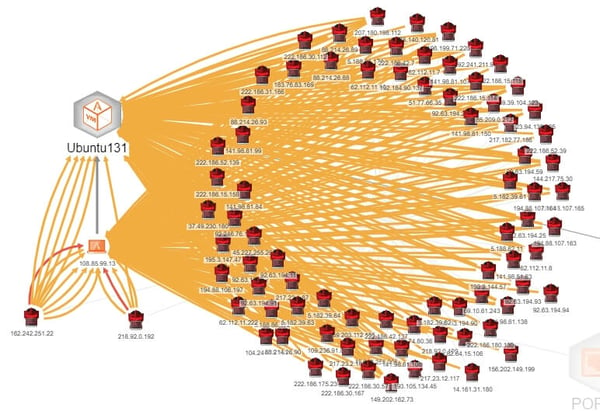

The deception network that I use in my lab is BOTsink from Attivo Networks, and it exhibits all of these characteristics. Here is a simple, entry-level example of my deception network. We'll dig a lot deeper in the coming posts. In this use case—far too simplistic to be of much use by itself, but fine for initial conceptualization—I have placed a decoy (an emulation of a web server in this case) on the internet without a firewall. I have no lures (an actual resource such as a document or other file) for the purpose of this exercise. We'll get into lures in a future post. The decoy, being unprotected, is an immediate target. The image below (Figure 1) shows high or very high severity attacks during the first day.

Figure 1 - Attacks Against a Decoy Web Server

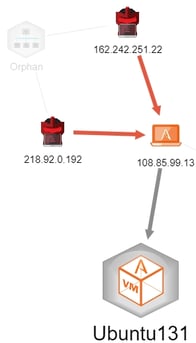

You will note that there are two attackers in the lower left corner that have red links to the victim. Those are very high severity attacks. I can get some forensic details by looking at those attacks, specifically as in Figures 2 and 3.

Figure 2 - Very Severe Attack

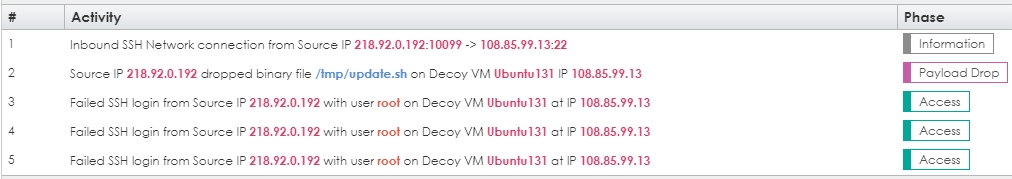

Figure 3 - Session Activity from One of the Attacks in Figure 2

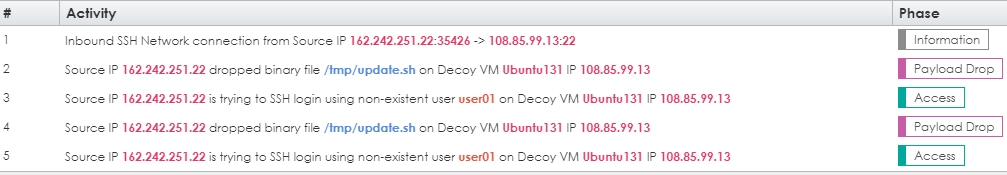

This attack originated from China and it has the footprint of a typical Chinese SSH scan. The attacker should have tried the user admin since it has a trivial password. While this short use case by no means showcases the benefits of a deception network, there are a couple of takeaways. First, the attacker was forced to interact with a decoy. We could do that with many other tools, but with them we could not identify the intruder for further action, should he succeed, as we can here. Second, in this case, he tried to act like a legitimate user but did some things we would not expect a legitimate user to do, such as drop a shell script and then try to log in multiple times. Figure 4 shows another example; this time the intruder tries to log in as a nonexistent user. This is an example of high entropy. Should he be successful, the entropy would lower as he attempts to match a legitimate user's behavior. We'll see some examples of that in a future post.

Figure 4 - Attempt to Log In as a Nonexistent User

As you can see, we have some easy-to-use indicators. This decoy took me less than five minutes to set up, and I could go to the decoy itself, log in, and track any related activity. The decoy is a VM, so it does actually exist.

Next time, I'll start looking at the application of forensics in the mix.

~~~

Read the first two installments in this series here:

• Part 1 – Getting Started

• Part 2: Learning the Lingo